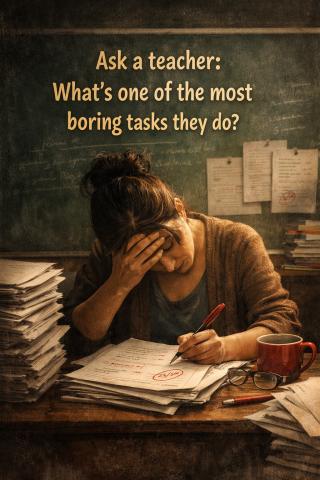

Ask a teacher: what’s one of the most boring tasks they do?

Invariably, the answer is evaluating answer sheets.

Thousands of teachers across the country are engaged in this activity right now, as students appear for annual exams and move from one grade to another.

Why do most teachers find it the least interesting part of their work? The answer lies in the emphasis on objectivity in evaluation. As long as a teacher is reading an answer sheet or assignment that she can discuss with students, she takes a keen interest in it. The moment it is standardized, with teachers having no say in designing the question papers and no knowledge of whose answer sheet they are checking, it becomes a chore.

What remains the motivation then?

Finishing the task. In most cases, that means completing the evaluation of 20 answer sheets and uploading the marks to a given portal.

Here, a question arises: when a teacher is engaged in such standardized, criteria- and rubric-driven evaluation, why shouldn’t this task be given to machines? Machines can perhaps do better at anything that lacks subjectivity. In my workshops, teachers often ask: “Sir, if AI can do everything, when will it start evaluating answer sheets?” Until a few months ago, I didn’t have an answer. But now, tools like Claude can handle this task. Upload scanned answer sheets, set the criteria, and Claude can come up with an evaluation that, in many cases, may even appear more consistent than that of human teachers.

In my previous posts, I have questioned the need for objective evaluation. I argue that evaluation is a process of learning, and it must lie in the hands of the teachers who teach the children.

However, with AI around, how is reading an answer sheet detached from its subjective context any different from reading AI-generated content? What objective do we achieve by continuing with this form of evaluation?

Institutions of higher education have already begun to move away from it. They have developed their own systems to decide who gets access and who does not. Most institutions no longer rely solely on board results; admissions are increasingly based on other examinations, including CUET and institutional entrance tests.

The NEP has envisioned making board exams less stressful. However, I feel the need is to rethink them entirely, perhaps even to scrap them. Think again: what purpose do they serve? Boards need to explore alternative ways of remaining relevant beyond conducting exams. They can become leading institutions of reform in assessment and evaluation. They may continue to certify learners, but must entrust teachers with the responsibility of assessing their students.

I am aware of the concern around bias that subjectivity may bring. But learning itself is deeply subjective. The question is not whether subjectivity should exist, but how to make it less biased and more meaningful. When a teacher knows whose answer sheet she is checking, and has the opportunity to discuss it with the student, evaluation becomes a process of teaching and learning.

Addressing bias remains an area we must think deeply about. But making evaluation objective in the AI era serves little purpose. After all, it is subjectivity that will survive. And by subjectivity, I mean this: when a teacher evaluates an answer sheet, she is able to visualise the child. She sees the imprint of her teaching. She notices a word or phrase the learner has picked up—something she once emphasised. She tracks the growth of an argument. She is surprised by a student’s example or analogy. All of this is possible only when the teacher knows whose answer sheet she is evaluating. Then, it no longer remains a chore. It becomes a meaningful exercise—one that teachers often carry into their conversations with colleagues, long after the marks have been uploaded.
